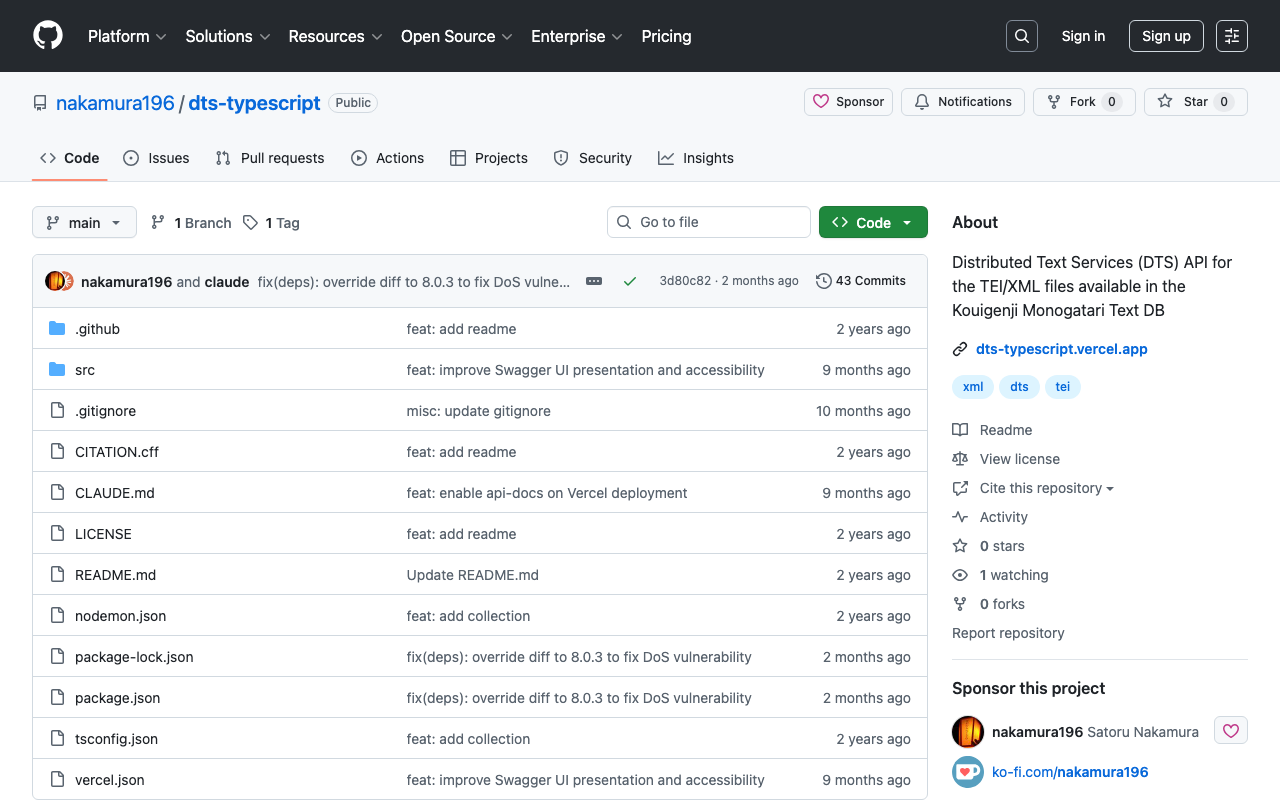

## Overview

I had the opportunity to learn how to use DTS (Distributed Text Services). This post is a memo of that experience.

## API Used

We will use the Alpheios API, which is listed on the following page.

https://github.com/distributed-text-services/specifications/?tab=readme-ov-file#known-corpora-accessible-via-the-dts-api

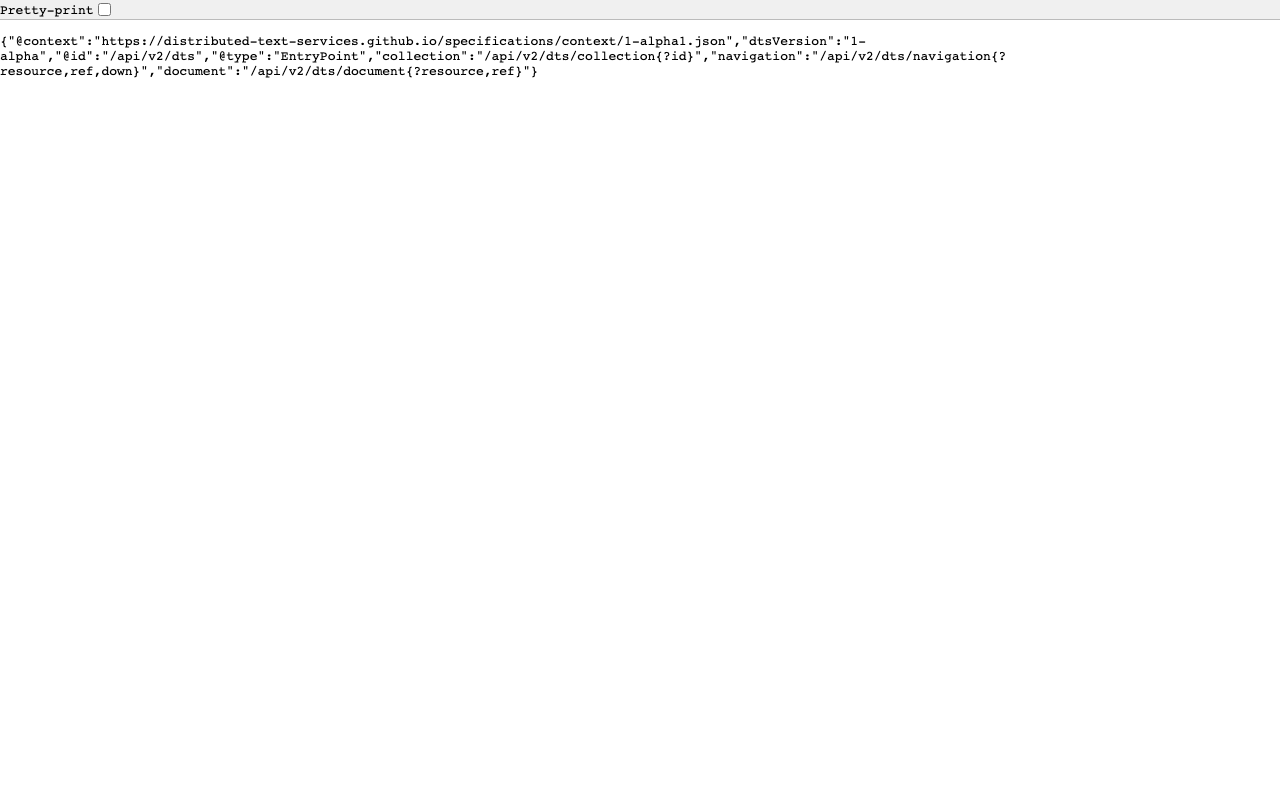

### Top

https://texts.alpheios.net/api/dts

The response shows that three endpoints are available: collections, documents, and navigation.

    {
      "navigation": "/api/dts/navigation",
      "@id": "/api/dts",
      "@type": "EntryPoint",
      "collections": "/api/dts/collections",
      "@context": "dts/EntryPoint.jsonld",
      "documents": "/api/dts/document"
    }

## Collection Endpoint

### collections

https://texts.alpheios.net/api/dts/collections
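The absolute collections URL can be derived from the relative paths in the entry point response. The following is a minimal sketch using only Python's standard library. `resolve_endpoints` and `fetch_json` are hypothetical helper names introduced for illustration and are not part of any DTS client. The sample dictionary copies the entry point response so that the example runs without network access.

```python
import json
from urllib.parse import urljoin
from urllib.request import urlopen

# Entry point URL taken from this post.
BASE_URL = "https://texts.alpheios.net/api/dts"


def resolve_endpoints(entry_point: dict, base_url: str = BASE_URL) -> dict:
    """Turn the relative paths of an EntryPoint response into absolute URLs."""
    return {
        name: urljoin(base_url, entry_point[name])
        for name in ("collections", "documents", "navigation")
        if name in entry_point
    }


def fetch_json(url: str) -> dict:
    """Fetch a URL and parse the body as JSON (performs a network request)."""
    with urlopen(url, timeout=30) as response:
        return json.load(response)


# Copy of the entry point response, so no request is needed here.
sample_entry_point = {
    "navigation": "/api/dts/navigation",
    "@id": "/api/dts",
    "@type": "EntryPoint",
    "collections": "/api/dts/collections",
    "@context": "dts/EntryPoint.jsonld",
    "documents": "/api/dts/document",
}

endpoints = resolve_endpoints(sample_entry_point)
for name, url in endpoints.items():
    print(f"{name}: {url}")
```

Against the live service, `fetch_json(BASE_URL)` could replace the sample dictionary, and `fetch_json(endpoints["collections"])` would then retrieve the collections response.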

The response shows that the root collection contains two sub-collections, Classical Latin and Ancient Greek.

    {
      "totalItems": 2,
      "member": [
        {
          "@id": "urn:alpheios:latinLit",
          "@type": "Collection",
          "totalItems": 3,
          "title": "Classical Latin"
        },
        {
          "@id": "urn:alpheios:greekLit",
          "@type": "Collection",
          "totalItems": 4,
          "title": "Ancient Greek"
        }
      ],
      "title": "None",
      "@id": "default",
      "@type": "Collection",
      "@context": {
        "dts": "https://w3id.org/dts/api#",
        "@vocab": "https://www.w3.org/ns/hydra/core#"
      }
    }

#### Classical Latin

Specify the id `urn:alpheios:latinLit` to narrow the collection to Classical Latin.
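As a rough sketch of this step, the members of a collection response can be listed and a request URL built for each one. The code assumes that the collections endpoint accepts the identifier as an `id` query parameter, as described in the DTS specification. `collection_url` and `list_members` are hypothetical helper names, and the sample data is a trimmed copy of the root collection response.

```python
from urllib.parse import urlencode

# Collections endpoint taken from this post.
COLLECTIONS_URL = "https://texts.alpheios.net/api/dts/collections"


def collection_url(collection_id: str, endpoint: str = COLLECTIONS_URL) -> str:
    """Build a collections URL for one collection.

    Assumption: the identifier is passed as the `id` query parameter.
    """
    return f"{endpoint}?{urlencode({'id': collection_id})}"


def list_members(collection: dict) -> list:
    """Return (id, title, totalItems) for each member of a collection response."""
    return [
        (member["@id"], member.get("title", ""), member.get("totalItems", 0))
        for member in collection.get("member", [])
    ]


# Trimmed copy of the root collection response, so no request is needed here.
sample_collection = {
    "totalItems": 2,
    "member": [
        {"@id": "urn:alpheios:latinLit", "@type": "Collection",
         "totalItems": 3, "title": "Classical Latin"},
        {"@id": "urn:alpheios:greekLit", "@type": "Collection",
         "totalItems": 4, "title": "Ancient Greek"},
    ],
    "@id": "default",
    "@type": "Collection",
}

members = list_members(sample_collection)
for member_id, title, total in members:
    print(f"{title} ({total} items): {collection_url(member_id)}")
```

`urlencode` percent-encodes the colons in the URN, so the query string is safe to send as is.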

...